Florida Woman Arrested After Treating 4,000 Patients With Stolen Nurse License

The Silence After the Shock

When the case of Autumn Bardisa first broke, the public reaction moved quickly through familiar stages: disbelief, outrage, and then exhaustion. A woman had allegedly worked inside a hospital for nearly two years under a stolen nursing identity. Thousands of patients had passed through her care. A credential system designed to prevent exactly this kind of deception had failed—not once, but repeatedly.

And then, almost as quickly as it appeared, the story seemed to settle into something more uncomfortable than outrage: understanding that the system itself was not built to fail loudly. It was built to fail quietly.

What makes this case endure is not only what happened inside one Florida hospital, but what it reveals about the architecture of trust in modern healthcare. Because Bardisa did not hack a system. She did not bypass a firewall. She did something far simpler—and far more unsettling.

She walked through doors that were already open.

The Hidden Machinery of Credentialing

To understand how a case like this happens, one has to step away from the dramatic headlines and into the procedural machinery that governs healthcare hiring.

Credentialing in hospitals is not a single action. It is a layered process involving HR departments, compliance officers, licensing databases, background checks, and supervisory verification. In theory, it forms a chain of accountability where no single point of failure should allow an unlicensed individual to practice.

In practice, however, that chain is only as strong as its enforcement at each step.

Hospitals often rely on a combination of:

Self-reported documentation from applicants

State licensing verification systems

Third-party credentialing services

Internal administrative review

Each of these layers assumes that the previous one has already done its job correctly.

That assumption is where the system becomes vulnerable.

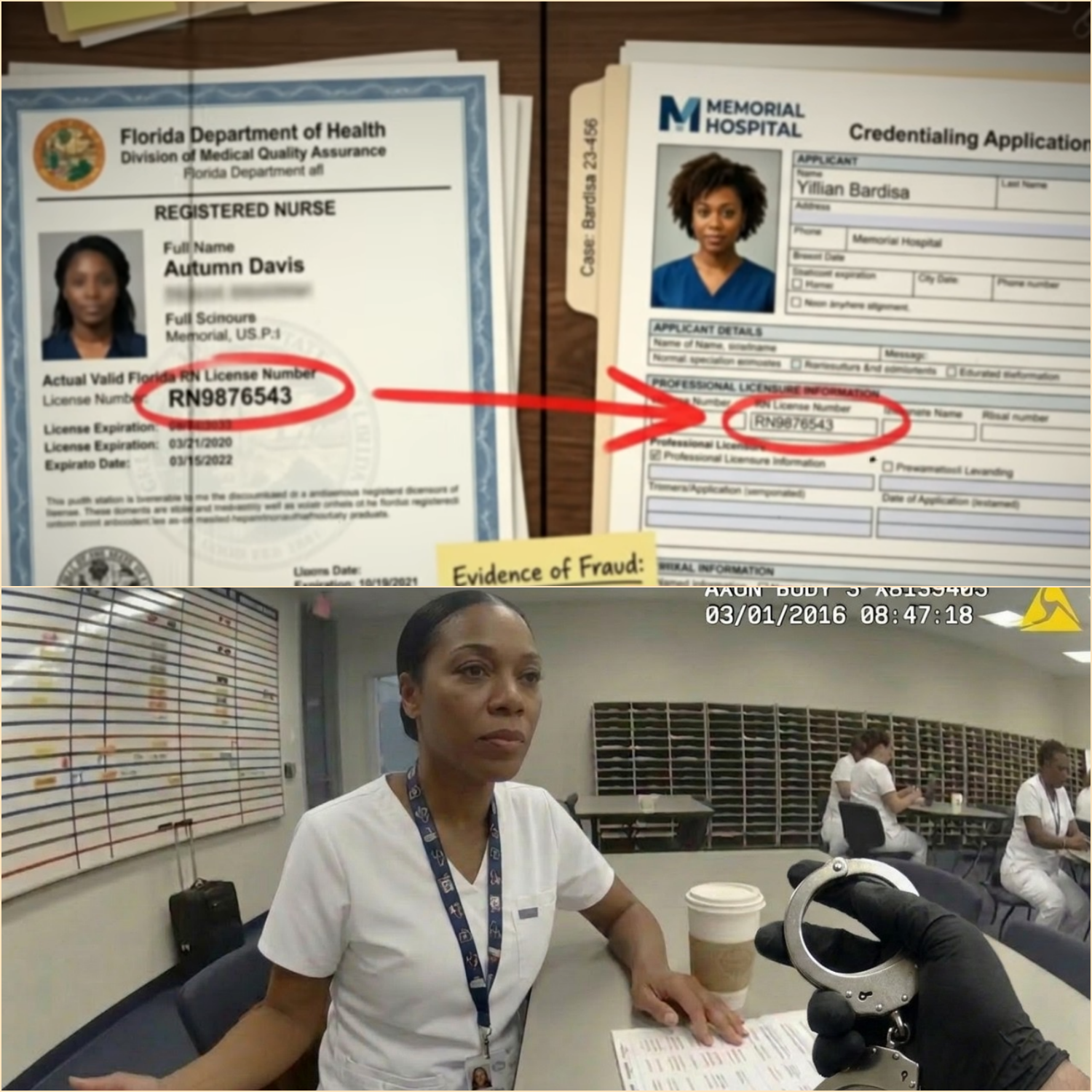

In Bardisa’s case, investigators later noted that discrepancies in her documentation were observed but never fully resolved. A missing marriage certificate. A mismatch in surname. A license number that belonged to a validly licensed nurse, but not to the applicant.

Individually, none of these flags automatically stopped employment. Together, they should have.

But together, they were treated as administrative noise.

The Psychology of “Plausible Identity”

One of the most overlooked elements in this case is not technological—it is psychological.

Bardisa did not present herself as an obvious fraud. She presented herself as plausible.

She spoke the language of healthcare hiring. She provided a believable explanation for inconsistencies. She occupied a category that hospitals actively recruit from: the transition stage between education and full licensure.

That category—often labeled “education-first nurse” or similar—exists precisely because healthcare systems are designed to absorb new professionals before their final certification steps are complete.

This creates a structural ambiguity: a space where someone is almost a nurse, but not yet fully licensed.

Bardisa operated inside that ambiguity.

And ambiguity, in systems built for efficiency, often gets resolved in favor of continuation rather than interruption.

The Database Illusion: Why “Verification” Isn’t Always Verification

Public understanding often assumes that digital licensing databases function as absolute gatekeepers. Type a name, get a result. Green means go. Red means stop.

But real-world systems are more fragmented.

State licensing databases confirm whether a license exists—not whether the person presenting it is the rightful holder. Identity matching is not always biometric. It is often text-based: name, license number, date of birth.

That means if someone obtains or memorizes valid credential data, the system may still return a “valid” result.

This is not a software failure. It is a design limitation.

In Bardisa’s case, the license number she used was real. It belonged to another registered nurse. That nurse had no connection to the fraud. But from the perspective of a database, the credential checked out.

The system verified the license.

It did not verify the person.
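The gap between those two checks can be shown in a few lines. The sketch below is purely illustrative: the registry, the license number, the names, and both function names are hypothetical and do not represent any real state licensing system or the hospital's actual process. It contrasts a lookup that only asks whether a license exists with one that also compares the name on record against the applicant and holds the file when a mismatch has no supporting documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LicenseRecord:
    number: str
    holder_name: str
    active: bool


# Hypothetical in-memory stand-in for a licensing database.
REGISTRY = {
    "RN-0001": LicenseRecord("RN-0001", "Jane Example", True),
}


def license_is_valid(number: str) -> bool:
    """License-only check: true for anyone who presents a real, active number."""
    record = REGISTRY.get(number)
    return record is not None and record.active


def verify_applicant(number: str, applicant_name: str,
                     name_change_documented: bool = False) -> str:
    """Also compare the record holder's name with the applicant's name.

    Returns "reject" for a missing or inactive license, "pass" when the
    names match or a name change is documented, and "hold" otherwise.
    """
    record = REGISTRY.get(number)
    if record is None or not record.active:
        return "reject"
    if record.holder_name.casefold() == applicant_name.casefold():
        return "pass"
    # A name mismatch without supporting documents stops the process
    # instead of becoming a task to follow up on later.
    return "pass" if name_change_documented else "hold"
```

Under these assumptions, license_is_valid("RN-0001") succeeds no matter who presents the number, while verify_applicant("RN-0001", "Alex Sample") returns "hold" until the name discrepancy is documented. Even the stricter check is still text-based, so it narrows the gap rather than closing it.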

The Moment the System Noticed—and Didn’t Act

One of the most critical details in the investigation is not the moment of discovery, but the moment of near-discovery at the very start of her employment.

During initial onboarding, hospital staff flagged an inconsistency between the married name Bardisa gave and the name on the license record. A routine request was made for supporting documentation.

The missing marriage certificate should have paused the process.

It did not.

Instead, the absence became a deferred task—something to be followed up on later, something assumed to be routine administrative lag.

This is a recurring pattern in institutional failure: not dramatic collapse, but incremental normalization of unresolved exceptions.

Each exception becomes slightly less urgent than the last.

Until exceptions are no longer exceptions at all.

A Workforce Under Pressure

To understand why these gaps persist, one must also understand the environment in which hospital administrators operate.

Healthcare systems in the United States—particularly community hospitals—function under persistent staffing pressure. Nursing shortages are widely documented. Patient volumes fluctuate. Recruitment pipelines are competitive and time-sensitive.

In such environments, hiring delays carry operational consequences.

Pausing onboarding for every documentation inconsistency may protect against rare fraud cases, but it may also delay staffing for critical units.

So institutions make trade-offs.

Most of the time, those trade-offs are harmless.

Until they are not.

The Digital Footprint That Built the Case

Once Bardisa was removed from the system, investigators reconstructed her activity through electronic records.

Every hospital interaction generates data:

Badge swipes at restricted access points

Electronic chart entries

Medication dispensing logs

Time-stamped patient notes

These systems are designed primarily for patient care continuity and liability tracking—not fraud detection.

Ironically, it was this same infrastructure that ultimately exposed the scale of the issue.

What investigators found was not a single act of impersonation, but a sustained pattern of routine clinical involvement under false credentials.

The system did not detect her presence as abnormal while she was inside it.

It only became legible as abnormal after she was removed.

The Ethical Core: Harm vs. Authority

One of the most contested aspects of the Bardisa case is the question of harm.

Hospital internal reviews did not identify confirmed patient injuries directly attributable to her actions. This fact has been cited in legal and institutional responses as a mitigating factor.

But critics argue that this framing misses the central issue.

Healthcare fraud of this type is not solely evaluated by outcome—it is evaluated by authority.

A licensed nurse is not simply someone performing tasks. They are someone legally empowered to make clinical judgments, administer medications, and act within a regulated scope of practice.

When that authority is falsified, the integrity of every decision made under that authority becomes uncertain.

Even in the absence of measurable harm, the structure of trust is compromised.

And trust, once structurally broken, cannot be retroactively restored for those affected.

Why Detection Happened at the Worst Possible Time

The irony of the case is difficult to ignore: Bardisa was discovered not through routine audit, but through promotion.

Promotion processes in hospitals often trigger full credential re-verification. They are one of the few moments where systems re-check what they previously assumed to be stable.

In other words, she was not caught when she was new.

She was caught when she became successful.

This reveals another structural flaw: verification systems are often front-loaded.

They are intense during onboarding, but significantly less rigorous during ongoing employment—unless a triggering event occurs.

That design assumes initial verification is sufficient to guarantee future legitimacy.

Bardisa’s case demonstrates the fragility of that assumption.
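One alternative to front-loaded verification is to re-check credentials on a fixed schedule rather than waiting for a trigger such as a promotion. The sketch below is a minimal illustration of that idea; the 180-day interval and the function name are assumptions chosen for the example, not a policy drawn from this case or from any regulator.

```python
from datetime import date, timedelta

# Assumed interval for illustration only.
REVERIFY_INTERVAL = timedelta(days=180)


def reverification_due(last_verified: date, today: date,
                       interval: timedelta = REVERIFY_INTERVAL) -> bool:
    """Return True once the interval since the last credential check has elapsed."""
    return today - last_verified >= interval
```

With a rule like this, a credential last checked at hire would come up for review on the calendar, whether or not the employee ever applied for a new role.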

The Real Nurse in the Background

There is another layer to this case that remains largely outside public view: the real registered nurse whose license number was used.

That nurse, working within the same healthcare system but at a different facility, was unaware for nearly two years that her professional identity had been duplicated and used elsewhere.

When informed, she became an unwilling participant in an investigation that had already been unfolding for months.

Her situation highlights a lesser-known vulnerability: professional identity theft in regulated fields does not always immediately affect the victim’s employment—but it can quietly attach their credentials to actions they never performed.

The long-term implications of that kind of identity contamination are still not fully addressed in most regulatory frameworks.

Institutional Response: Reform or Routine Adjustment?

Following the case, hospital systems across the country issued statements emphasizing continued commitment to credentialing integrity.

But internally, responses have been more complex.

Common reforms under discussion include:

Mandatory re-verification of licenses at fixed intervals

Improved cross-checking between HR and compliance systems

Enhanced identity verification using multi-factor authentication

Automated alerts for mismatched credential metadata

Yet each of these solutions carries operational cost, administrative burden, or technical limitations.

More importantly, none of them eliminate the underlying issue: systems that rely on documentation integrity assume honesty at the entry point.

If that assumption fails, downstream safeguards often activate too late.

The Uncomfortable Truth About Trust Systems

The Bardisa case ultimately forces a confrontation with a difficult truth: large institutions do not verify reality continuously. They verify it periodically.

Between those checkpoints, they operate on trust.

Trust in documentation. Trust in databases. Trust in human compliance.

This is not a flaw unique to healthcare. It exists in aviation, finance, education, and government licensing systems.

But healthcare carries a unique weight because the consequences are immediate and personal.

A broken verification system in another industry may result in financial loss.

In healthcare, it affects human vulnerability at its most exposed moments.

What Cannot Be Measured

There is one dimension of this case that resists quantification.

It is not the number of patients.

It is not the duration of employment.

It is not even the legal outcome.

It is the experience of those 4,486 patient interactions—each one framed by a belief that the person providing care was licensed, qualified, and accountable under professional standards.

Even if no physical harm is documented, the psychological reality of that deception cannot be fully measured.

It exists in hindsight.

In uncertainty.

In the quiet question many patients may never ask out loud: Who was actually in that room with me?

Closing Reflection: A System That Worked—Until It Didn’t

The most unsettling conclusion of the Bardisa case is not that the system failed completely.

It is that the system worked—exactly as designed.

It processed applications. It flagged inconsistencies. It requested documentation. It escalated when a promotion triggered re-verification. It involved law enforcement. It produced an arrest, prosecution, and sentencing.

At every stage, the system responded.

But it responded after the fact.

Not before.

Not during.

After.

And in a profession built on preventing errors before they reach the patient, timing is not a detail—it is the entire safeguard.

Final Transition Into the Larger Question

The story of Autumn Bardisa does not end with a conviction or a probation sentence. It ends in a larger, unresolved space where institutional design, human judgment, and systemic pressure intersect.

Because the question now is no longer simply how one individual entered a hospital without a valid license.

The question is how many systems—across how many institutions—might be operating under similar assumptions of correctness that have never been fully tested.

And whether the next case, wherever it emerges, will be discovered in time—or only after thousands more silent interactions have already taken place.
